Misinformation vs. Disinformation

Headlines shout about the latest research findings. TikTok videos show people telling others how to avoid or treat health conditions. Neighbors talk about what their cousin’s husband’s best friend said about a new treatment. How much should you believe and how can you tell the difference between what’s true and what isn’t?

With so much health information available now, it can be hard to sort through what is right, what is wrong, and what might be in between. However, to be an effective patient advocate for yourself or someone else, you need to know not only how to tell the difference between correct and incorrect information, but also whether the incorrect data, counsel, or “knowledge” was unknowingly passed along (misinformation) or deliberately shared (disinformation). And why is it important to distinguish between the two?

First, it’s essential to understand the significant role that misinformation and disinformation play in healthcare. In 2021, U.S. Surgeon General Vivek H. Murthy, MD, said, “Health misinformation is a serious threat to public health. It can cause confusion, sow mistrust, harm people’s health, and undermine public health efforts.”

If it wasn’t apparent before COVID-19 hit the world, it sure is now.

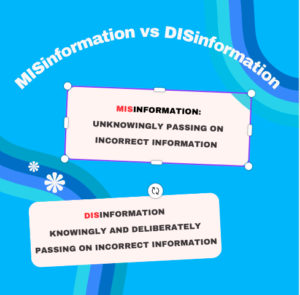

So, what is the difference between misinformation and disinformation?

According to the Centers for Disease Control and Prevention (CDC):

Misinformation is false information shared by people who do not intend to mislead others.

Disinformation is false information deliberately created and disseminated with malicious intent.

It’s not hard to see how misinformation spreads. You’re chatting with a coworker, and she shares some (mis)information she heard from someone she trusts. It makes sense to her, and she has no reason to disbelieve it or do an online search to back it up. While misinformation is still dangerous, there is no malintent. She was not sharing it to spread untruths further, but was trying to be helpful.

Disinformation, on the other hand, is a deliberate move to deceive people and make them believe things that aren’t true. Many kinds of people spread disinformation, for many reasons: tobacco companies that claimed just a few decades ago that cigarettes don’t cause cancer so they could stay in business, conspiracy theorists, and people who want to make money by selling so-called cures.

Whether you have received misinformation or disinformation, the result is the same: false information can cause harm.

False, inaccurate, or unsubstantiated?

A false claim is a lie. Aside from the tobacco companies’ claims that smoking is safe, the most famous healthcare lie in recent memory is that the MMR vaccine causes autism. We know that it does not. But once the paper claiming the connection was published, the bell was rung and couldn’t be undone, even though the paper was retracted (taken down) and experts worldwide condemned the claim. Thousands of people jumped on the false claim, pushing the anti-vax movement to the forefront. This is a clear case of disinformation. The author knowingly and deliberately published false information, and there are still consequences today.

An inaccurate claim may sound like it makes sense but doesn’t provide complete evidence. A good example is the belief that adults should drink eight glasses of water a day to stay hydrated. The nutritionist Frederick J. Stare, PhD, who developed this theory, had no supporting evidence that we need to drink that much water. Additionally, he ignored the fact that we can consume a large amount of fluid in our daily diet, lessening the need to drink water if we’re not thirsty.

An unsubstantiated claim is one that lacks evidence. The claim that ivermectin cures COVID-19 falls into this category. It doesn’t, but that didn’t stop many people from claiming it does. ClinicalTrials.gov lists multiple ongoing and completed studies of ivermectin in the treatment of COVID-19.

An Infodemic

Misinformation and disinformation spread so fast now that the World Health Organization coined the term “infodemic.”

“An infodemic is too much information including false or misleading information in digital and physical environments during a disease outbreak. It causes confusion and risk-taking behaviours that can harm health. It also leads to mistrust in health authorities and undermines the public health response.”

There used to be a saying that there is no such thing as too much information. But maybe there really is too much information and that is why it is becoming so hard to understand the issues at hand.

Countering misinformation and disinformation

It’s important to understand that unlike those who deliberately spread disinformation, many people who share misinformation don’t mean to mislead people. When encountering new information from a close friend or colleague, we have to be careful not to ridicule or shame them. That can cause defensiveness and an unwillingness to learn why their information is incorrect. Instead, we need to counter the misinformation by being curious. “Can you share with me where you got this information?” And then counter with “May I share some resources with you that provide a different perspective so that we can talk about this?” We also have to do it in a way that fits their cultural, educational, and emotional backgrounds.

We can’t do it alone, though. Countering health misinformation means government entities, technology platforms, media entities, and educational institutions all have to take part.

Public health campaigns:

- Prioritize evidence-based information as a basis for conversations with various populations

- Create public service announcements or educational materials targeted specifically toward certain demographic groups

- Support fact-checking initiatives that help people identify and dispel myths associated with certain health topics

- Remember that science evolves, so it’s important to share with the public why recommendations have changed. For example, when the pandemic began, there were conflicting reports about whether people should wear masks and, if so, what kind. As research went on, the recommendations were refined.

Technology platforms (social media sites and search engines):

- Actively collaborate to recognize misleading content and develop novel algorithms to minimize the spread of misinformation

- Train users to detect and report inaccurate information, including misleading health claims, on these platforms

Governments:

- Enact policies and regulations to encourage and regulate responsible dissemination of health information while penalizing those who intentionally promote misleading material

- Offer quick access to reliable sources

Research institutions and medical professionals:

- Recognize the importance of effective, plain-language communication when countering health misinformation

- Use accessible language that takes account of emotional or cultural influences that could affect how data is understood

- Engage healthcare providers to foster trusting relationships between credible sources and people seeking accurate facts

If you are looking online for health information, please take a minute to read our blog post, Is Dr. Google Reliable?

Disclaimer

The information in this blog is provided as an information and educational resource only. It is not to be used or relied upon for diagnostic or treatment purposes.

The blog does not represent or guarantee that its information is applicable to a specific patient’s care or treatment. The educational content in this blog is not to be interpreted as medical advice from any of the authors or contributors. It is not to be used as a substitute for treatment or advice from a practicing physician or other healthcare professional.